
Keeping up with an industry as fast-moving as AI is a tall order. So until an AI can do it for you, here’s a handy roundup of recent stories in the world of machine learning, along with notable research and experiments we didn’t cover on their own.

This week, Meta released the latest in its Llama series of generative AI models: Llama 3 8B and Llama 3 70B. Capable of analyzing and writing text, the models are “open sourced,” Meta said, and are intended to be a “foundational piece” of systems that developers design with their unique goals in mind.

“We believe these are the best open source models of their class, period,” Meta wrote in a blog post. “We’re embracing the open source ethos of releasing early and often.”

There’s only one problem: the Llama 3 models aren’t really “open source,” at least not in the strictest definition.

Open source implies that developers can use the models how they choose, unfettered. But in the case of Llama 3, as with Llama 2, Meta has imposed certain licensing restrictions. For example, Llama models can’t be used to train other models. And app developers with over 700 million monthly users must request a special license from Meta.

Debates over the definition of open source aren’t new. But as companies in the AI space play fast and loose with the term, it’s injecting fuel into long-running philosophical arguments.

Last August, a study co-authored by researchers at Carnegie Mellon, the AI Now Institute and the Signal Foundation found that many AI models branded as “open source,” not just Llama, come with big catches. The data required to train the models is kept secret. The compute power needed to run them is beyond the reach of many developers. And the labor to fine-tune them is prohibitively expensive.

So if these models aren’t truly open source, what are they, exactly? That’s a good question; defining open source with respect to AI isn’t an easy task.

One pertinent unresolved question is whether copyright, the foundational IP mechanism open source licensing is based on, can be applied to the various components and pieces of an AI project, in particular a model’s inner scaffolding (e.g. embeddings). Then there’s the mismatch to overcome between the perception of open source and how AI actually functions: open source was devised in part to ensure that developers could study and modify code without restrictions. With AI, though, which ingredients you need to do the studying and modifying is open to interpretation.

Wading through all the uncertainty, the Carnegie Mellon study does make clear the harm inherent in tech giants like Meta co-opting the phrase “open source.”

Often, “open source” AI projects like Llama end up kicking off news cycles (free marketing) and providing technical and strategic benefits to the projects’ maintainers. The open source community rarely sees these same benefits, and when it does, they’re marginal compared to the maintainers’.

Instead of democratizing AI, “open source” AI projects, especially those from big tech companies, tend to entrench and expand centralized power, say the study’s co-authors. That’s good to keep in mind the next time a major “open source” model release comes around.

Here are some other AI stories of note from the past few days:

- Meta updates its chatbot: Coinciding with the Llama 3 debut, Meta upgraded Meta AI, its AI chatbot across Facebook, Messenger, Instagram and WhatsApp, with a Llama 3-powered backend. It also launched new features, including faster image generation and access to web search results.

- AI-generated porn: Ivan writes about how the Oversight Board, Meta’s semi-independent policy council, is turning its attention to how the company’s social platforms are handling explicit, AI-generated images.

- Snap watermarks: Social media service Snap plans to add watermarks to AI-generated images on its platform. A translucent version of the Snap logo with a sparkle emoji, the new watermark will be added to any AI-generated image exported from the app or saved to the camera roll.

- The new Atlas: Hyundai-owned robotics company Boston Dynamics has unveiled its next-generation humanoid Atlas robot, which, in contrast to its hydraulics-powered predecessor, is all-electric and much friendlier in appearance.

- Humanoids on humanoids: Not to be outdone by Boston Dynamics, the founder of Mobileye, Amnon Shashua, has launched a new startup, Menteebot, focused on building bipedal robotics systems. A demo video shows Menteebot’s prototype walking over to a table and picking up fruit.

- Reddit, translated: In an interview with Amanda, Reddit CPO Pali Bhat revealed that an AI-powered language translation feature to bring the social network to a more global audience is in the works, along with an assistive moderation tool trained on Reddit moderators’ past decisions and actions.

- AI-generated LinkedIn content: LinkedIn has quietly started testing a new way to boost its revenues: a LinkedIn Premium Company Page subscription, which, for fees that appear to be as steep as $99/month, includes AI to write content and a suite of tools to grow follower counts.

- A Bellwether: Google parent Alphabet’s moonshot factory, X, this week unveiled Project Bellwether, its latest bid to apply tech to some of the world’s biggest problems. Here, that means using AI tools to identify natural disasters like wildfires and flooding as quickly as possible.

- Protecting kids with AI: Ofcom, the regulator charged with enforcing the U.K.’s Online Safety Act, plans to launch an exploration into how AI and other automated tools can be used to proactively detect and remove illegal content online, specifically to shield children from harmful content.

- OpenAI lands in Japan: OpenAI is expanding to Japan, with the opening of a new Tokyo office and plans for a GPT-4 model optimized specifically for the Japanese language.

More machine learnings

Image Credits: DrAfter123 / Getty Images

Can a chatbot change your mind? Swiss researchers found that not only can they, but if they are pre-armed with some personal information about you, they can actually be more persuasive in a debate than a human with that same info.

“This is Cambridge Analytica on steroids,” said project lead Robert West from EPFL. The researchers suspect the model (GPT-4 in this case) drew from its vast stores of arguments and facts online to present a more compelling and confident case. But the outcome kind of speaks for itself. Don’t underestimate the power of LLMs in matters of persuasion, West warned: “In the context of the upcoming US elections, people are concerned because that’s where this kind of technology is always first battle tested. One thing we know for sure is that people will be using the power of large language models to try to swing the election.”

Why are these models so good at language anyway? That’s one area with a long history of research, going back to ELIZA. If you’re curious about one of the people who’s been there for a lot of it (and performed no small amount of it himself), check out this profile on Stanford’s Christopher Manning. He was just awarded the John von Neumann Medal; congrats!

In a provocatively titled interview, another long-term AI researcher (who has graced the TechCrunch stage as well), Stuart Russell, and postdoc Michael Cohen speculate on “How to keep AI from killing us all.” Probably a good thing to figure out sooner rather than later! It’s not a superficial discussion, though; these are smart people talking about how we can actually understand the motivations (if that’s the right word) of AI models and how regulations ought to be built around them.

The interview is actually regarding a paper in Science published earlier this month, in which they propose that advanced AIs capable of acting strategically to achieve their goals, which they call “long-term planning agents,” may be impossible to test. Essentially, if a model learns to “understand” the testing it must pass in order to succeed, it may very well learn ways to creatively negate or circumvent that testing. We’ve seen it at a small scale, why not a large one?

Russell proposes restricting the hardware needed to make such agents… but of course, Los Alamos and Sandia National Labs just got their deliveries. LANL just had the ribbon-cutting ceremony for Venado, a new supercomputer intended for AI research, composed of 2,560 Grace Hopper Nvidia chips.

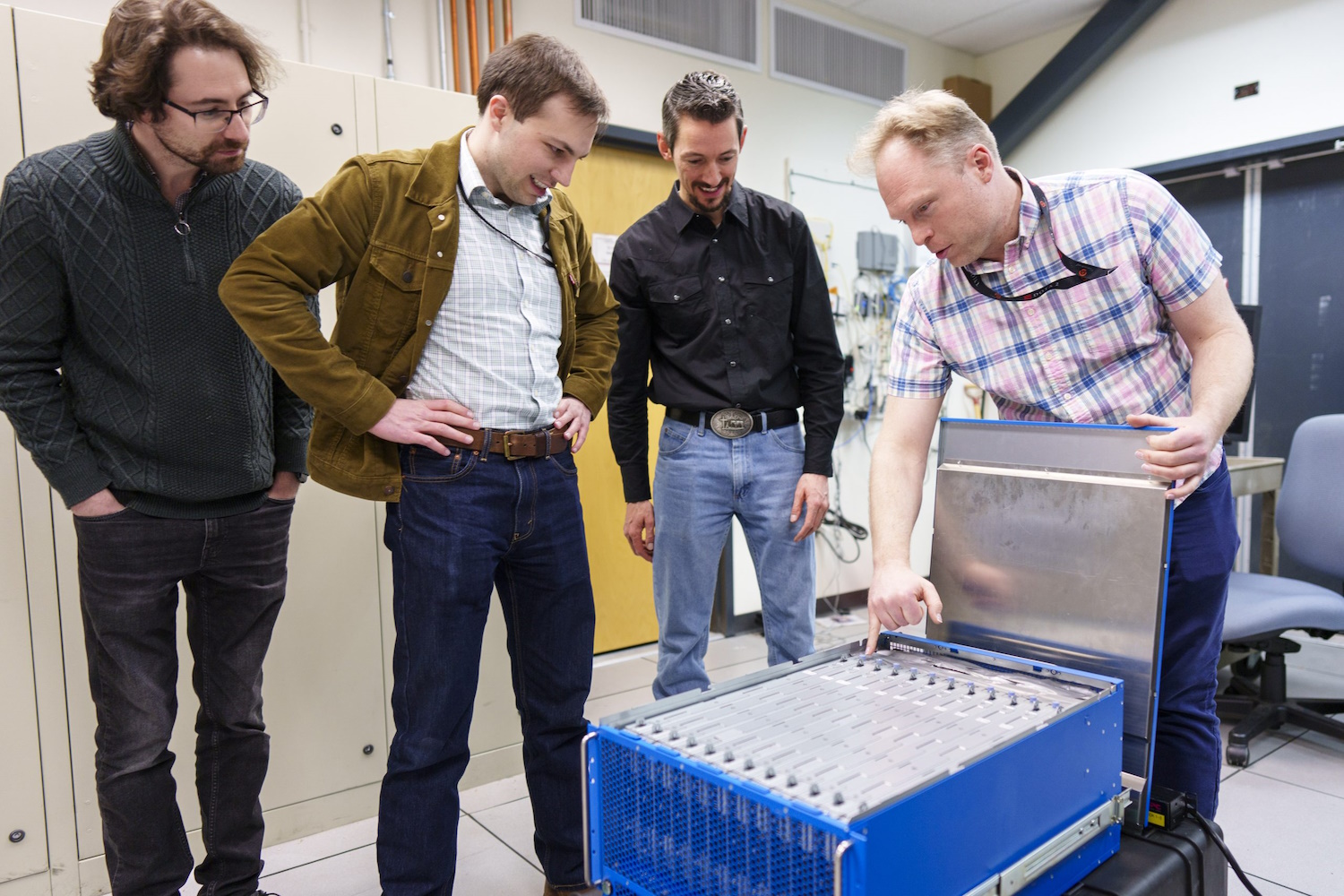

Researchers look into the new neuromorphic computer.

And Sandia just received “an extraordinary brain-based computing system called Hala Point,” with 1.15 billion artificial neurons, built by Intel and believed to be the largest such system in the world. Neuromorphic computing, as it’s called, isn’t intended to replace systems like Venado, but to pursue new methods of computation that are more brain-like than the rather statistics-focused approach we see in modern models.

“With this billion-neuron system, we will have an opportunity to innovate at scale both new AI algorithms that may be more efficient and smarter than existing algorithms, and new brain-like approaches to existing computer algorithms such as optimization and modeling,” said Sandia researcher Brad Aimone. Sounds dandy… just dandy!
