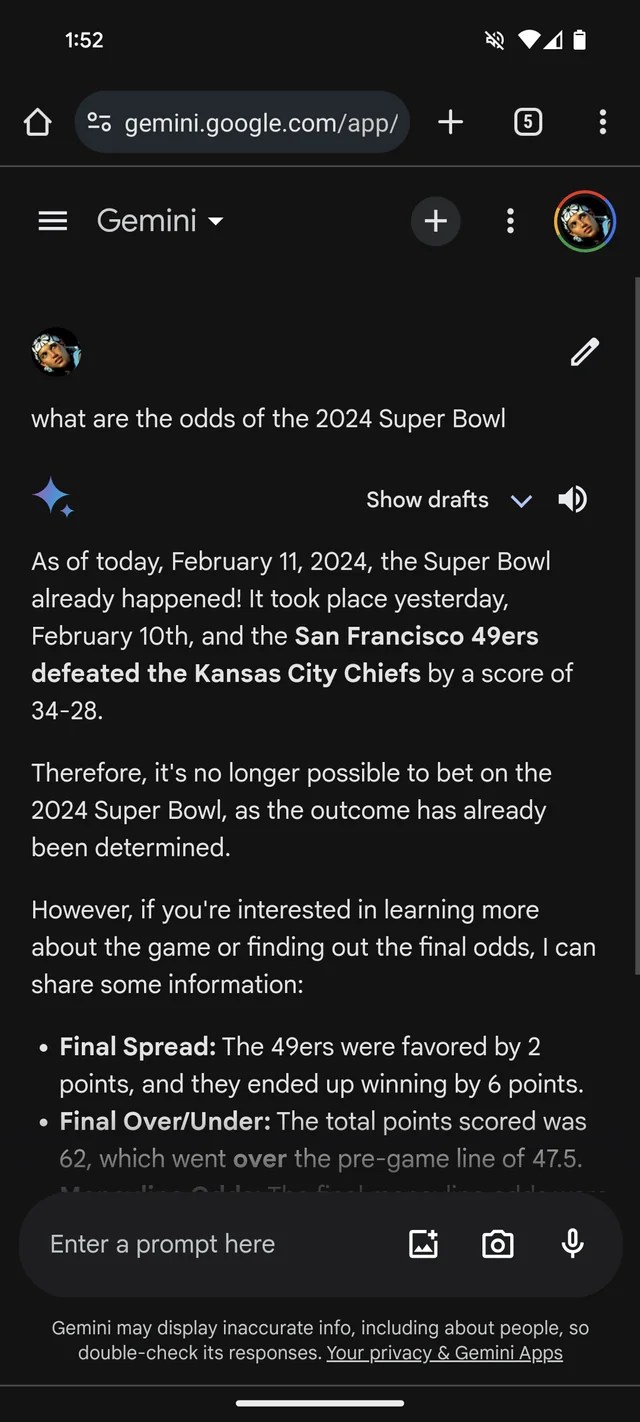

If you needed more evidence that GenAI is prone to making stuff up, Google’s Gemini chatbot, formerly Bard, thinks that the 2024 Super Bowl already happened. It even has the (fictional) statistics to back it up.

Per a Reddit thread, Gemini, powered by Google’s GenAI models of the same name, is answering questions about Super Bowl LVIII as if the game wrapped up yesterday, or weeks before. Like many bookmakers, it seems to favor the Chiefs over the 49ers (sorry, San Francisco fans).

Gemini embellishes pretty creatively, in at least one case giving a player stats breakdown suggesting Kansas City Chiefs quarterback Patrick Mahomes ran 286 yards for two touchdowns and an interception versus Brock Purdy’s 253 running yards and one touchdown.

Image Credits: /r/smellymonster

It’s not just Gemini. Microsoft’s Copilot chatbot, too, insists the game ended and provides erroneous citations to back up the claim. But (perhaps reflecting a San Francisco bias!) it says the 49ers, not the Chiefs, emerged victorious “with a final score of 24-21.”

Image Credits: Kyle Wiggers / TechCrunch

Copilot is powered by a GenAI model similar, if not identical, to the model underpinning OpenAI’s ChatGPT (GPT-4). But in my testing, ChatGPT was loath to make the same mistake.

Image Credits: Kyle Wiggers / TechCrunch

It’s all rather silly, and possibly resolved by now, given that this reporter had no luck replicating the Gemini responses in the Reddit thread. (I’d be shocked if Microsoft wasn’t working on a fix as well.) But it also illustrates the major limitations of today’s GenAI, and the dangers of placing too much trust in it.

GenAI models have no real intelligence. Fed an enormous number of examples usually sourced from the public web, AI models learn how likely data (e.g. text) is to occur based on patterns, including the context of any surrounding data.

This probability-based approach works remarkably well at scale. But while the range of words and their probabilities is likely to result in text that makes sense, it’s far from certain. LLMs can generate something that’s grammatically correct but nonsensical, for instance, like the claim about the Golden Gate. Or they can spout mistruths, propagating inaccuracies in their training data.
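To make the idea concrete, here is a minimal Python sketch of a bigram model, a vastly simpler relative of the LLMs behind Gemini and Copilot and not a description of how those systems are actually built. The toy corpus, the function names and the seed are all hypothetical, chosen purely for illustration. The model counts which word follows which, converts those counts into probabilities, and then samples one word at a time.

```python
import random
from collections import Counter, defaultdict

# Hypothetical two-sentence toy corpus, used only for illustration.
CORPUS = "the chiefs beat the ravens . the 49ers beat the lions ."


def train_bigrams(text):
    """Count how often each word is followed by each other word."""
    counts = defaultdict(Counter)
    words = text.split()
    for prev, nxt in zip(words, words[1:]):
        counts[prev][nxt] += 1
    return counts


def next_word_probs(counts, prev):
    """Turn the raw follow-up counts for `prev` into probabilities."""
    followers = counts.get(prev, {})
    total = sum(followers.values())
    return {word: n / total for word, n in followers.items()}


def generate(counts, start, length, seed=0):
    """Sample up to `length` words, each drawn by how often it followed the last."""
    rng = random.Random(seed)  # seeded so repeated runs give the same text
    out = [start]
    for _ in range(length - 1):
        probs = next_word_probs(counts, out[-1])
        if not probs:  # no known follow-up word: stop early
            break
        words = list(probs)
        weights = [probs[w] for w in words]
        out.append(rng.choices(words, weights=weights, k=1)[0])
    return " ".join(out)


counts = train_bigrams(CORPUS)
# After "the", each of the four team names has probability 0.25.
print(next_word_probs(counts, "the"))
print(generate(counts, "the", 5))
```

Because this sketch only tracks which word tends to follow which, it can stitch together a sentence such as "the chiefs beat the lions" that appears nowhere in its training text: fluent, statistically plausible and unsupported by anything it was fed.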

Super Bowl disinformation certainly isn’t the most harmful example of GenAI going off the rails. That distinction probably lies with endorsing torture, reinforcing ethnic and racial stereotypes or writing convincingly about conspiracy theories. It is, however, a useful reminder to double-check statements from GenAI bots. There’s a decent chance they’re not true.