
CIOs and other technology leaders have come to realize that generative AI (GenAI) use cases require careful monitoring: there are inherent risks with these applications, and strong observability capabilities help to mitigate them. They’ve also realized that the same data science accuracy metrics commonly used for predictive use cases, while useful, are not completely sufficient for LLMOps.

When it comes to monitoring LLM outputs, response correctness remains important, but now organizations also need to worry about metrics related to toxicity, readability, personally identifiable information (PII) leaks, incomplete information, and most importantly, LLM costs. While all these metrics are new and important for specific use cases, quantifying the unknown LLM costs is typically the one that comes up first in our customer discussions.

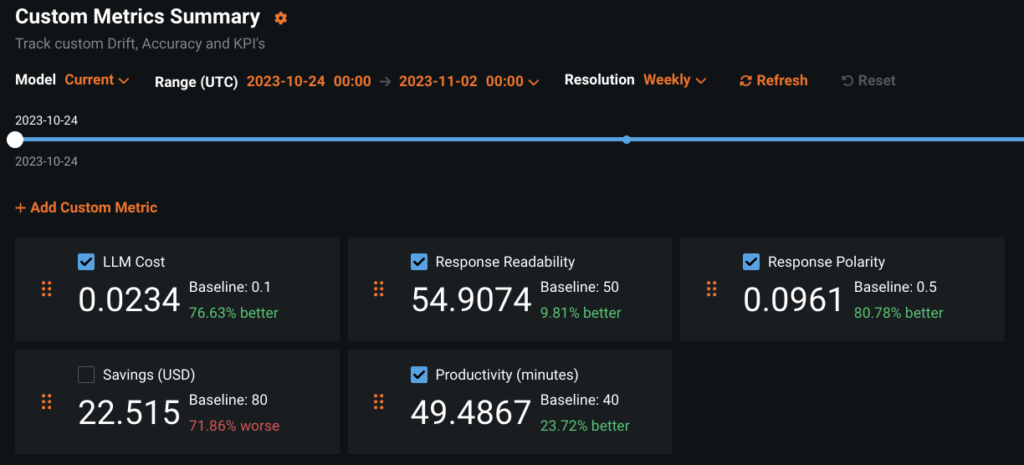

This article shares a generalizable approach to defining and monitoring custom, use case-specific performance metrics for generative AI use cases, for deployments that are monitored with DataRobot AI Production.

Remember that models do not need to be built with DataRobot to use the extensive governance and monitoring functionality. Also remember that DataRobot offers many deployment metrics out-of-the-box in the categories of Service Health, Data Drift, Accuracy and Fairness. The present discussion is about adding your own user-defined Custom Metrics to a monitored deployment.

To illustrate this feature, we’re using a logistics-industry example published on DataRobot Community GitHub that you can replicate on your own with a DataRobot license or with a free trial account. If you choose to get hands-on, also watch the video below and review the documentation on Custom Metrics.

Monitoring Metrics for Generative AI Use Cases

While DataRobot offers you the flexibility to define any custom metric, the structure that follows will help you narrow your metrics down to a manageable set that still provides broad visibility. If you define one or two metrics in each of the categories below, you’ll be able to monitor cost, end-user experience, LLM misbehaviors, and value creation. Let’s dive into each in further detail.

Total Cost of Ownership

Metrics in this category monitor the expense of operating the generative AI solution. In the case of self-hosted LLMs, this would be the direct compute costs incurred. When using externally-hosted LLMs, this would be a function of the cost of each API call.

Defining your custom cost metric for an external LLM will require knowledge of the pricing model. As of this writing, the Azure OpenAI pricing page lists the price for using GPT-3.5-Turbo 4K as $0.0015 per 1000 tokens in the prompt, plus $0.002 per 1000 tokens in the response. The get_gpt_3_5_cost function below calculates the price per prediction using these hard-coded prices, with token counts for the prompt and response calculated with the help of tiktoken.
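Before the tokenizer-based function, the pricing arithmetic can be checked by hand. The sketch below assumes the token counts are already known; the counts of 500 and 200 are made-up illustration values, and `cost_from_token_counts` is a hypothetical helper name that is not part of the linked example.

```python
# Prices quoted above for GPT-3.5-Turbo 4K, converted to dollars per token.
PROMPT_PRICE_PER_TOKEN = 0.0015 / 1000
RESPONSE_PRICE_PER_TOKEN = 0.002 / 1000


def cost_from_token_counts(prompt_tokens, response_tokens):
    """Return the dollar cost of one call given known token counts."""
    return (
        prompt_tokens * PROMPT_PRICE_PER_TOKEN
        + response_tokens * RESPONSE_PRICE_PER_TOKEN
    )


# Illustrative counts only: 500 prompt tokens and 200 response tokens.
# 500 * 0.0000015 + 200 * 0.000002 = 0.00075 + 0.0004 = 0.00115 dollars.
example_cost = cost_from_token_counts(500, 200)
print(f"${example_cost:.5f}")
```

At these prices a single call costs a fraction of a cent, which is why the metric becomes meaningful mainly when summed across all predictions made by a deployment.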

    import tiktoken

    # cl100k_base is the encoding used by the GPT-3.5-Turbo family of models.
    encoding = tiktoken.get_encoding("cl100k_base")


    def get_gpt_token_count(text):
        """Return the number of tokens in the given text."""
        return len(encoding.encode(text))


    def get_gpt_3_5_cost(
        prompt, response, prompt_token_cost=0.0015 / 1000, response_token_cost=0.002 / 1000
    ):
        """Return the dollar cost of one prediction from its prompt and response."""
        return (
            get_gpt_token_count(prompt) * prompt_token_cost
            + get_gpt_token_count(response) * response_token_cost
        )

User Experience

Metrics in this category monitor the quality of the responses from the perspective of the intended end user. Quality will vary based on the use case and the user. You might want a chatbot for a paralegal researcher to produce long answers written formally with lots of details. However, a chatbot for answering basic questions about the dashboard lights in your car should answer plainly without using unfamiliar automotive terms.

Two starter metrics for user experience are response length and readability. You already saw above how to capture the generated response length and how it relates to cost. There are many options for readability metrics. All of them are based on some combination of average word length, average number of syllables per word, and average sentence length. The Flesch Reading Ease score, one of the Flesch-Kincaid readability tests, is one such metric with broad adoption. On its scale of roughly 0 to 100, higher scores indicate that the text is easier to read. The textstat package offers an easy way to calculate the readability of the generated response, as in the function below.
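For intuition about what such a score rewards, here is a rough, standard-library-only approximation of the Flesch Reading Ease formula, 206.835 − 1.015 × (words per sentence) − 84.6 × (syllables per word). The syllable counter is a deliberately naive assumption (one syllable per run of vowels), and both function names are hypothetical, so the numbers will not match textstat exactly and can fall outside the 0 to 100 range.

```python
import re


def count_syllables(word):
    """Rough heuristic: one syllable per run of consecutive vowels."""
    vowel_runs = re.findall(r"[aeiouy]+", word.lower())
    return max(1, len(vowel_runs))


def naive_flesch_reading_ease(text):
    """Approximate Flesch Reading Ease; textstat's result will differ."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        return None  # the score is undefined for empty text
    syllables = sum(count_syllables(w) for w in words)
    return (
        206.835
        - 1.015 * (len(words) / len(sentences))
        - 84.6 * (syllables / len(words))
    )


plain = "Your car needs oil. Stop and add some soon."
formal = (
    "Illumination of the indicator signifies insufficient lubricant "
    "pressure, necessitating immediate professional intervention."
)
# Short words in short sentences score higher than long words in one long sentence.
print(naive_flesch_reading_ease(plain) > naive_flesch_reading_ease(formal))  # True
```

The comparison shows the behavior the dashboard-light chatbot needs: plain wording scores well above formal, polysyllabic wording.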

    import textstat


    def get_response_readability(response):
        """Return the Flesch Reading Ease score of the response."""
        return textstat.flesch_reading_ease(response)

Safety and Regulatory Metrics

This category contains metrics to monitor generative AI solutions for content that might be offensive (Safety) or violate the law (Regulatory). The right metrics to represent this category will vary greatly by use case and by the regulations that apply to your industry or your location.

It is important to note that metrics in this category apply to the prompts submitted by users and the responses generated by large language models. You might wish to monitor prompts for abusive and toxic language, overt bias, prompt-injection hacks, or PII leaks. You might wish to monitor generative responses for toxicity and bias as well, plus hallucinations and polarity.
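As one possible starting point for a PII-leak metric, the sketch below flags prompts or responses that match a few regular expressions. The patterns, the `PII_PATTERNS` table, and both function names are illustrative assumptions rather than part of the linked example, and real PII detection needs far broader coverage than three US-centric patterns.

```python
import re

# Illustrative patterns only; these are assumptions, not a complete PII detector.
PII_PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "us_phone": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def count_pii_matches(text):
    """Return the number of pattern matches per PII type found in text."""
    return {name: len(p.findall(text)) for name, p in PII_PATTERNS.items()}


def has_pii(text):
    """Return 1 if any pattern matched, else 0, for use as a numeric metric."""
    return int(any(count_pii_matches(text).values()))


prompt = "Email jane.doe@example.com or call 555-123-4567 about my container."
print(count_pii_matches(prompt))
print(has_pii("Where is my container?"))
```

Because the same functions accept any string, they can be applied to both the user prompt and the generated response and reported as two separate metrics.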

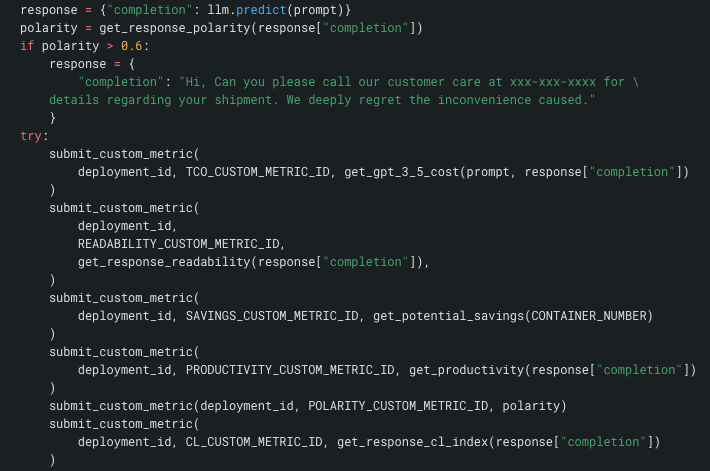

Monitoring response polarity is useful for ensuring that the solution isn’t generating text with a consistently negative outlook. In the linked example, which deals with proactive emails to inform customers of shipment status, the polarity of the generated email is checked before it is shown to the end user. If the email is extremely negative, it is overwritten with a message that instructs the customer to contact customer support for an update on their shipment. Here is one way to define a Polarity metric with the help of the TextBlob package.

    import numpy as np
    from textblob import TextBlob


    def get_response_polarity(response):
        """Return the mean sentence polarity, from -1 (negative) to 1 (positive)."""
        blob = TextBlob(response)
        return np.mean([sentence.sentiment.polarity for sentence in blob.sentences])

Business Value

CIOs are under increasing pressure to demonstrate clear business value from generative AI solutions. In an ideal world, the ROI, and how to calculate it, is a consideration in approving the use case to be built. But, in the current rush to experiment with generative AI, that has not always been the case. Adding business value metrics to a GenAI solution that was built as a proof-of-concept can help secure long-term funding for it and for the next use case.

The metrics in this category are entirely use-case dependent. To illustrate this, consider how to measure the business value of the sample use case dealing with proactive notifications to customers about the status of their shipments.

One way to measure the value is to consider the average typing speed of a customer support agent who, in the absence of the generative solution, would type out a custom email from scratch. Ignoring the time required to research the status of the customer’s shipment, the value of the typing time alone, at 150 words per minute and $20 per hour, can be computed as follows.

    def get_productivity(response):
        # Dollars per word: $20 per hour / (150 words per minute * 60 minutes).
        # The token count stands in for the word count here.
        return get_gpt_token_count(response) * 20 / (150 * 60)

More likely the real business impact will be in reduced calls to the contact center and higher customer satisfaction. Let’s stipulate that this business has experienced a 30% decline in call volume since implementing the generative AI solution. In that case the real savings associated with each email proactively sent can be calculated as follows.

    def get_savings(CONTAINER_NUMBER):
        # The 30% decline in call volume, read as the chance an email averts a call.
        prob = 0.3
        email_cost = 0.05  # dollars
        call_cost = 4.00  # dollars
        return prob * (call_cost - email_cost)

Create and Submit Custom Metrics in DataRobot

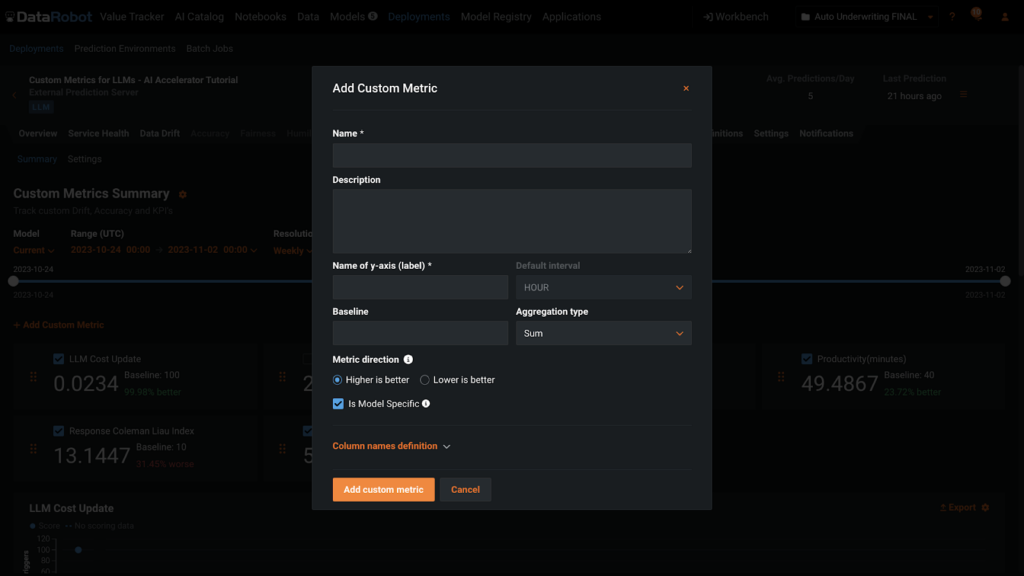

Create Custom Metric

Once you have definitions and names for your custom metrics, adding them to a deployment is very straightforward. You can add metrics to the Custom Metrics tab of a Deployment using the +Add Custom Metric button in the UI or with code. For both routes, you’ll need to supply the information shown in the dialog box below.

Submit Custom Metric

There are several options for submitting custom metrics to a deployment, which are covered in detail in the support documentation. Depending on how you define the metrics, you might know the values immediately, or there may be a delay and you’ll need to associate them with the deployment at a later date.

It is best practice to conjoin the submission of metric details with the LLM prediction to avoid missing any information. In the screenshot below, which is an excerpt from a larger function, you see llm.predict() in the first row. Next you see the Polarity test and the override logic. Finally, you see the submission of the metrics to the deployment.

Put another way, there is no way for a user to use this generative solution without having the metrics recorded. Every call to the LLM and its response is fully monitored.
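The pattern described here (predict, test polarity, override, then submit metrics) can be sketched roughly as follows. This is not the code from the linked example: the function name, the -0.5 threshold, the fallback wording, and the three injected callables are all hypothetical stand-ins for the real LLM call, the polarity metric, and whichever submission method your deployment uses.

```python
NEGATIVE_POLARITY_THRESHOLD = -0.5  # hypothetical cutoff for "extremely negative"
FALLBACK_MESSAGE = "Please contact customer support for an update on your shipment."


def predict_and_record(prompt, llm_predict, score_polarity, submit_metrics):
    """Generate a response, apply the polarity override, and record metrics.

    llm_predict, score_polarity, and submit_metrics are injected callables
    standing in for the real LLM call, polarity metric, and metric submission.
    """
    response = llm_predict(prompt)
    polarity = score_polarity(response)
    overridden = polarity < NEGATIVE_POLARITY_THRESHOLD
    if overridden:
        response = FALLBACK_MESSAGE
    # Metrics are submitted on every call, before the response is returned,
    # so no prediction can reach the user unrecorded.
    submit_metrics({"polarity": polarity, "overridden": int(overridden)})
    return response


# Usage with stand-in callables; a list plays the role of the metric store.
recorded = []
reply = predict_and_record(
    "What is the status of container ABC123?",
    llm_predict=lambda p: "Your shipment is hopelessly lost.",
    score_polarity=lambda r: -0.9,
    submit_metrics=recorded.append,
)
print(reply)
print(recorded)
```

Keeping the submission inside the same function as the prediction is what guarantees that the two cannot drift apart.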

DataRobot for Generative AI

We hope this deep dive into metrics for generative AI gives you a better understanding of how to use the DataRobot AI Platform for operating and governing your generative AI use cases. While this article focused narrowly on monitoring metrics, the DataRobot AI Platform can help you simplify the entire AI lifecycle: to build, operate, and govern enterprise-grade generative AI solutions, safely and reliably.

Enjoy the freedom to work with all the best tools and techniques, across cloud environments, all in one place. Break down silos and prevent new ones with one consistent experience. Deploy and maintain safe, high-quality generative AI applications and solutions in production.
